Chaos limits predictability; the most cited example is the weather being changed by a butterfly.

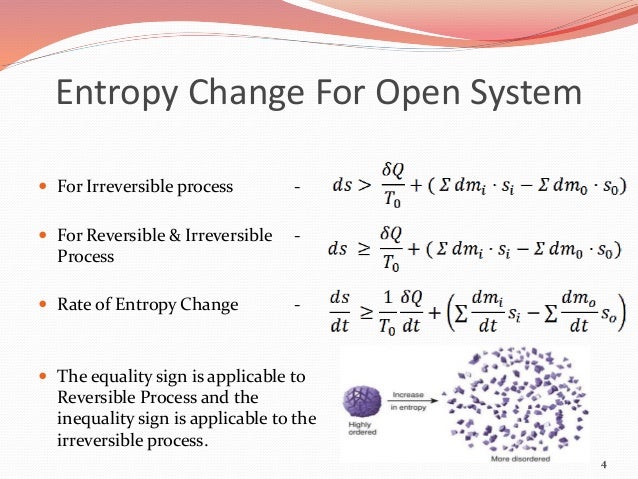

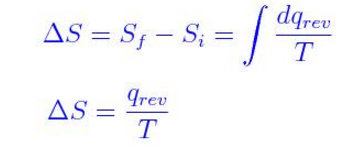

Entropy is related to chaos in three ways: i) an entropy formula expresses how the value of entropy depends on the probabilities of alternative states, ii) the growth of entropy as elementary quantities change back and forth reflects its convexity, and iii) chaotic motion is a genuine producer of entropy.

The word "entropy" was coined in 1865 by Rudolf Clausius, a German professor of physics. He formed it by analogy with "energy", from the Greek "en-" (in) and "tropē" (a turning or transformation). It describes motion in an abstract parameter space, a trend that establishes the asymmetry between past and future. Entropy is an integral quantity characterizing the totality of the motion, much as "profit" summarizes the result of several complex processes in a single number.

The second law of thermodynamics dictates that the total entropy never decreases in a closed system. Here, the restriction to closed systems is important: Earth, with the evolution of life upon it, is not a closed system.

In energy technology, through the interpretation of heat as molecular motion, entropy revealed itself as a concept describing complexity in general. Ludwig Boltzmann's permutation entropy is the logarithm of the number of interchanges at the microscopic level that leave the macroscopic appearance unchanged. This makes equilibrium the optimal state of big systems built from many simple elements by simple rules. Our own contemporary research reveals that entropy is also useful in describing processes with dynamic equilibria in which detailed balance (the equality of rates in microscopic changes) fails, but a total balance of gains and losses still holds. The statistics of such dynamic processes stand the test of time, as they deliver stationary probability density functions.
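Boltzmann's counting and the probability-based entropy formula can be compared numerically. The sketch below is only an illustration under stated assumptions: it takes the "number of interchanges" to be the multinomial count W = N! / (n_1! n_2! ...), sets Boltzmann's constant to 1, and uses function names and example occupation numbers that are invented here rather than taken from the article.

```python
from math import lgamma, log


def permutation_entropy(counts):
    """Return ln W for W = N! / (n_1! * n_2! * ...).

    W counts the microscopic arrangements that leave the occupation
    numbers (the macroscopic look) unchanged. lgamma(n + 1) equals ln(n!),
    which avoids computing huge factorials directly.
    """
    n_total = sum(counts)
    return lgamma(n_total + 1) - sum(lgamma(n + 1) for n in counts)


def probability_entropy(counts):
    """Return -sum(p_i * ln p_i) with p_i = n_i / N."""
    n_total = sum(counts)
    return -sum((n / n_total) * log(n / n_total) for n in counts if n > 0)


# Hypothetical occupation numbers of three alternative states.
counts = [500, 300, 200]
per_element = permutation_entropy(counts) / sum(counts)
print(per_element, probability_entropy(counts))
```

For large systems the two printed values approach each other, which is one way to see how a formula in terms of probabilities emerges from counting permutations.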
Tamás Sándor Biró, Vice Director at the Wigner Research Centre for Physics, discusses the current status of entropy formula research.

Some facts about entropy: the term "entropy" was first introduced by Rudolf Clausius in 1865. It comes from the Greek "en-" (inside) and "trope" (transformation). Before that, it was known as "equivalence-value". In physics and chemistry, the entropy symbol is a capital S, said to have been chosen by Clausius in honor of Sadi Carnot (the father of thermodynamics). In information theory, the entropy symbol is usually H, the capital form of the Greek letter eta.

Ecologists use entropy as a diversity measure. From an ecological point of view, it is best if the terrain is species-differentiated. Programmers deal with a particular interpretation of entropy called programming complexity; cyclomatic complexity is one way to quantify it.

You may also come across the phrase "password entropy". It is a measure of how random a password is. It takes into account the number of characters in your password and the pool of unique characters you can choose from (e.g., 26 lowercase letters, or 36 lowercase letters and digits). The higher the entropy of your password, the harder it is to crack.
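As a rough illustration of password entropy, the sketch below uses the common convention E = L · log2(R), where L is the password length and R the size of the character pool. That formula is an assumption added here, not something stated in the text, and it only holds if every character is picked uniformly and independently at random. The function name is hypothetical.

```python
from math import log2


def password_entropy_bits(length, pool_size):
    """Entropy in bits of a password of `length` characters drawn
    uniformly and independently from `pool_size` distinct symbols.

    Assumes truly random choice; human-chosen passwords are usually
    far less random than this estimate suggests.
    """
    if length < 0 or pool_size < 1:
        raise ValueError("length must be >= 0 and pool_size >= 1")
    return length * log2(pool_size)


# Eight characters from the two pools mentioned in the text.
print(password_entropy_bits(8, 26))  # about 37.6 bits (lowercase only)
print(password_entropy_bits(8, 36))  # about 41.4 bits (lowercase + digits)
```

Both a longer password and a larger pool raise the value, which is why either makes a randomly generated password harder to crack.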
